After you have registered a domain name, set up hosting, picked the desired theme, installed some useful plugins, and created a blog on your WordPress site, it’s time to check whether your site is properly indexed.

If you are not familiar with the process of domain registration, or with what to consider when choosing the best hosting for your website, we have already published insightful articles on these topics that are worth your time. Once you have completed these steps successfully, you need to know how to get your website indexed properly and quickly in Google.

Search engines are like books. And just like any book, they rely on an index. While a book index lists topics with page numbers, a search engine builds its index from what different websites have written about a particular topic.

How long does it take to index a new website? How do you get a website indexed on Google? We will answer these questions in this article. We will also discuss some indexing-related topics that you need to know to properly understand the process. Understanding the process is crucial, as it can help you get indexed quickly.

13 Simple ways to get Google to index your site instantly –

Themeim assures you that website indexing is easy. You can get Google to index your site just by following these 13 simple steps –

1. Use Proper SEO tools:

Most people use a variety of plugins to make SEO-related work easier. While there are tons of options, we highly suggest using Yoast SEO or RankMath. These plugins are free to download and cover the basic needs.

Some of you may have heard of the Yoast SEO plugin, and some of you may already be using it. It has been available for a while and has proven useful thanks to its outstanding SEO performance.

Many use the premium version of this plugin to enjoy more features. This suggests the free version impressed them enough to purchase the premium one.

Furthermore, submitting an XML sitemap is easier with Yoast. The sitemap file will be named something like sitemap_index.xml. Along with this, the robots.txt file can be optimized using this plugin.

RankMath might be relatively new, but it is gaining ground by providing reliable service. The best part about this tool is that it integrates with the Google Search Console. RankMath is not only good for local SEO but also good for image SEO, WooCommerce SEO, and more.

Along with that, this plugin has a built-in SEO optimizer that can be turned on and off based on your requirements. Although Yoast is a reputed platform, many claim that it doesn’t work so well on the Genesis framework. RankMath can be a more suitable choice for such scenarios.

If you want to know more about these types of plugins, we would suggest reading our blog on the best WordPress plugins.

Well, the best part is that you don’t even need to install a plugin to use Google Search Console. Google makes this tool available to users at no cost. The tool was formerly known as Google Webmaster Tools, and many people still know it by that name. Let’s just say it is the most valuable tool for SEO.

Now, let’s demonstrate using the Google Search Console to get Google to index your site.

Create an account or log in to Google Search Console with your existing Gmail account. You will notice a button labeled “Add a property”. Click the button and type your domain name. After providing your domain name, click the “Add” button.

You will be redirected to a new page to verify the ownership of your property –

On the page, you will find an HTML file verification method. Just follow the on-screen instructions. If you find this verification process complicated, try one of the other available options.

Once you have done everything right, including setting up your preferred domain, go to your Index Status to see how many URLs Google has indexed over the past year.

This makes it easy to tell whether Google is indexing your pages. On the advanced panel, you will get an idea of the number of pages that have been blocked by the robots.txt file. So, you should use at least one SEO tool to maintain your site properly, even if you are an SEO expert.

2. Create Sitemap and submit them to Google Search Console:

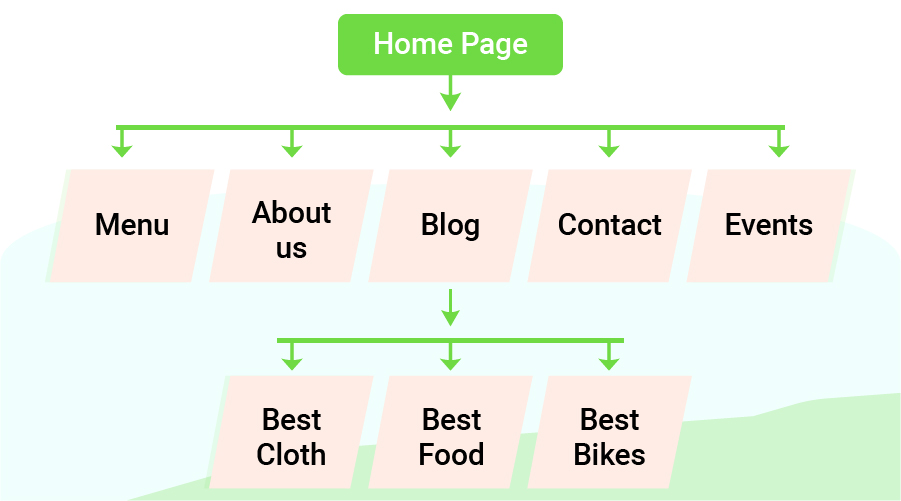

Okay! This step has 2 parts: creating sitemaps and submitting them to Google Search Console. Follow them in that order. A sitemap is basically an XML document that works like a map so that the crawlers can identify the proper pages of your site.

An ideal XML sitemap is a key ingredient for successful indexation. Thanks to XML sitemaps, crawlers find the pages of your website, learn where new content appears, identify the important pages, and so on.

Although crawlers are smart, it can take up to 24 hours to index a new blog without appropriate sitemaps. It is called a sitemap because it is a map of your website, so you can’t expect quick indexing without one.

Based on sitemaps, search engines like Google understand when there are new pages or changes on your website. Tools like Screaming Frog and Google XML Sitemaps can create XML sitemaps for your website.
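If you are curious what such a tool produces, here is a minimal Python sketch that builds a sitemap by hand. The `build_sitemap` helper, the yourdomain.com URLs, and the dates are placeholders we made up for illustration; a real plugin will generate a more complete file.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML for a list of (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        ET.SubElement(entry, "lastmod").text = last_modified  # YYYY-MM-DD
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages on a placeholder domain
pages = [
    ("https://yourdomain.com/", "2024-01-15"),
    ("https://yourdomain.com/blog/first-post/", "2024-01-10"),
]
print(build_sitemap(pages))
```

Each page gets one `url` entry, and the `lastmod` date is what hints to crawlers that a page has changed.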

Once you have created sitemaps for all of your pages, you have to submit them to Google Search Console.

Why do you need to submit the sitemaps to Google Search Console?

If you create sitemaps but don’t submit them, Google may not learn about the pages that you want indexed. Submitting them also helps Google find the important pages once the crawlers have gone through each page.

Inside the Search Console homepage, go to Google Search Console > Site Configuration > Sitemaps. You may see an “Add/Test Sitemap” button, which is located at the top right corner. Hit the button and paste the URL of your sitemap.

You will notice the status of your sitemap is showing ‘Pending’ after hitting the submit button. And you will notice the number of pages that have been submitted vs the number of pages that have been indexed, once the sitemaps are approved.

3. Use the URL inspection tool to check Google indexing:

The URL inspection tool is one of the vital tools for checking Google indexing. If you want to check whether a specific page on your website has been indexed or not, then we can assure you this is the ultimate tool. Being a Google tool, it reports exactly what Google knows about the page.

Even if you are confident that Google has indexed your site, it is better to use this inspection tool to double-check, because you can never be too sure. To find out if a particular page is indexed on Google, type the URL of that page into the URL Inspection Tool. You get a “URL is on Google” message if the page is properly indexed.

On the contrary, if you see either a “URL is not on Google” message or an ‘N/A’ message, then the page is still not indexed.

4. The proper configuration of the “robots.txt” file:

Google crawls your website according to the instructions in the robots.txt file. These instructions tell Google and other search engines which parts of your site should be crawled and which parts shouldn’t.

Therefore, if Google or another search engine finds the robots.txt file for your website, it will crawl your website abiding by the instructions. If there is no robots.txt file (for example, the request for it returns a 404 error), then Google or the search engine will crawl without any restrictions.

Optimize the crawl budget by removing the crawl blocks in the robots.txt file. One of the main reasons for a website not getting indexed is a crawl block inside the robots.txt file.

To fix this, check the robots.txt file, which is located in the root directory of your website, like yourdomain.com/robots.txt

Now, you need to remove either of these code snippets –

User-agent: Googlebot
Disallow: /

User-agent: *
Disallow: /

Now, what do these rules in the robots.txt file tell the search engine? They tell the Google bots not to crawl any pages on your website. We are sure you don’t want that to happen. Simply remove these rules to solve the issue.
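If you prefer to test this yourself, Python’s standard library ships a robots.txt parser. The sketch below is only an illustration: `is_crawl_allowed` is a helper name we made up, and the rules are pasted in as text rather than downloaded from a live site.

```python
from urllib.robotparser import RobotFileParser


def is_crawl_allowed(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt text lets user_agent crawl url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# A rule set that blocks every crawler from the whole site
blocking_rules = "User-agent: *\nDisallow: /\n"
# An empty Disallow value blocks nothing
open_rules = "User-agent: *\nDisallow:\n"

print(is_crawl_allowed(blocking_rules, "https://yourdomain.com/blog/"))
print(is_crawl_allowed(open_rules, "https://yourdomain.com/blog/"))
```

The first check reports that crawling is blocked, and the second reports that it is allowed. Keep in mind that Python’s parser may not match Googlebot’s behavior in every edge case, so treat the result as a quick sanity check.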

Sometimes, a particular page of your website may not get indexed because of this same reason. If this is the case, copy and paste the URL of the page into the URL inspection tool. To reveal more details, click on the Coverage block. And then, look for “Crawl allowed? No: blocked by robots.txt”.

If you see this exact status, then that page is blocked from crawling in the robots.txt file. Open the robots.txt file, search for the ‘Disallow’ rule that matches the page, and remove that rule where applicable.

5. Generate powerful internal links on your site:

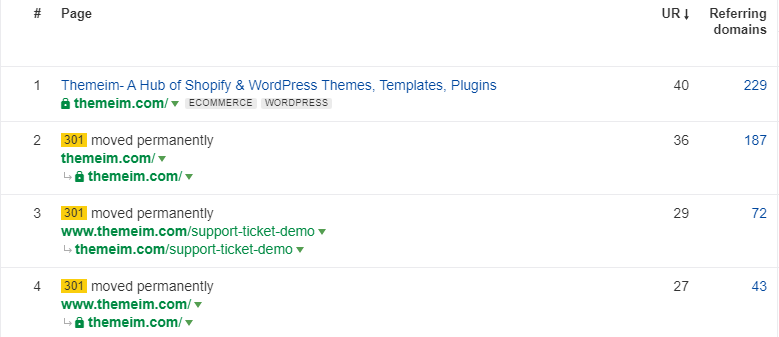

Generating powerful internal links in articles helps spread URL Rating (UR) across your pages. The page that has the highest UR has the most authority.

So how can you measure the UR of a particular page?

It’s so easy. Just head over to Ahrefs Site Explorer and see the “Best pages by links” report.

This generates a report of all the pages of your website, ranked by UR. The page with the highest authority will be on top. We will share a secret with you.

If you are struggling to get some pages indexed, you might want to link to them from the most authoritative page on your website. As Google crawls more important pages more frequently, the chances of those pages getting indexed will increase if you add internal links.

Remember to insert the links in the most appropriate and natural way possible. Also, pages that link to each other must discuss related topics. It is not a good idea to link from a powerful page to a page that has no connection with it.
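To see which internal links a page already has, you can list them with a few lines of Python. This is a rough sketch using only the standard library; `LinkCollector` and `internal_links` are names we made up, and the HTML snippet stands in for a real page.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def internal_links(html, page_url):
    """Return absolute URLs of links that stay on the same host as page_url."""
    collector = LinkCollector()
    collector.feed(html)
    site = urlparse(page_url).netloc
    resolved = (urljoin(page_url, href) for href in collector.hrefs)
    return [link for link in resolved if urlparse(link).netloc == site]


# Hypothetical page content on a placeholder domain
html = '<a href="/pricing/">Pricing</a> <a href="https://example.org/">Elsewhere</a>'
print(internal_links(html, "https://yourdomain.com/blog/"))
```

A page that you want indexed but that never shows up in these lists for your strongest pages is a good candidate for a new internal link.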

6. Get rid of poor-quality pages and unnecessary URLs:

Google doesn’t like low-quality pages because Google has to send too many crawlers to verify those pages. Now, how do you measure a poor-quality page? Based on some aspects, you can tell that a particular page is low in quality. Google says –

“Wasting server resources on [low-value-add pages] will drain crawl activity from pages that do actually have value, which may cause a significant delay in discovering great content on a site.”

You can probably guess that crawling low-quality pages takes up Google’s time. However, Google has also stated that most publishers won’t have to worry about the crawl budget. Crawling only takes quite a while when Google needs to go through a massive number of pages.

With fewer pages, it won’t take long unless a significant number of them are low-quality.

That is why removing unnecessary pages from your website is ideal. It helps your website get indexed quickly. Several methods can be used to deal with a poor-quality page, such as –

- Manually Check and Update the page:

When a page has some value but generates little traffic, it is possible that the page isn’t optimized for a relevant keyword. Changing the keyword to a more relevant option may better optimize the page. This is highly recommended for getting traffic from organic searches.

If the page is a core one that earns revenue, or you don’t want to change it because you and your audience are used to seeing it the way it is, then just leave the page as it is. This would be the wisest decision.

- Automate Content Audit Process:

When improvement isn’t showing after manually checking a particular page, automating the content audit process can be a better solution. Moreover, manual checking can be very time-consuming sometimes.

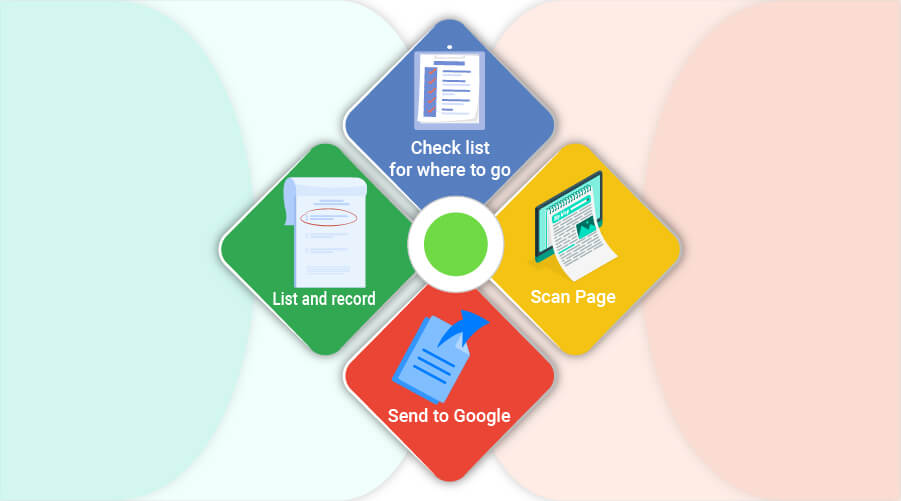

Some suggest automating the content audit through Google Sheets or Excel. To do this, you enter some data about your pages into a Google Sheet or Excel file and work through it step by step. Get rid of irrelevant and low-quality pages by using a content audit template.

- Noindex:

You can set a particular page to noindex if it has some value to you, but not to search engines. Landing pages, thank-you pages, and offer pages often fall into this category.

- Delete (404):

When a page is completely useless and has no value to you, your audience, or search engines, it can be deleted. However, you should never delete a page unless you are fully certain and have manually reviewed it carefully.

A page can be deleted when it doesn’t generate any traffic and doesn’t have any backlinks. Still, instead of leaving deleted pages as 404s, redirecting them is a smart decision.

- Redirect (301):

Rather than deleting a page, redirecting it can be the smarter choice, because even if the page has no value to search engines and generates no traffic, it may have backlinks from old posts. Consider this advice from Google’s John Mueller:

“There are two approaches to actually tackling this [low-quality content]. On the one hand, you can improve your content—and from my point of view, if you can improve your content, that’s probably the best approach possible because then you have something really useful on your website and you’re providing something useful for the web in general…“

- Using Robots.txt file to block crawling:

By editing the robots.txt file, you can turn off Google crawling for a specific page. When do you need to follow this step?

Follow it when you find that a page or a set of pages has value to viewers but not to search engines. Press releases and archives are typical examples.

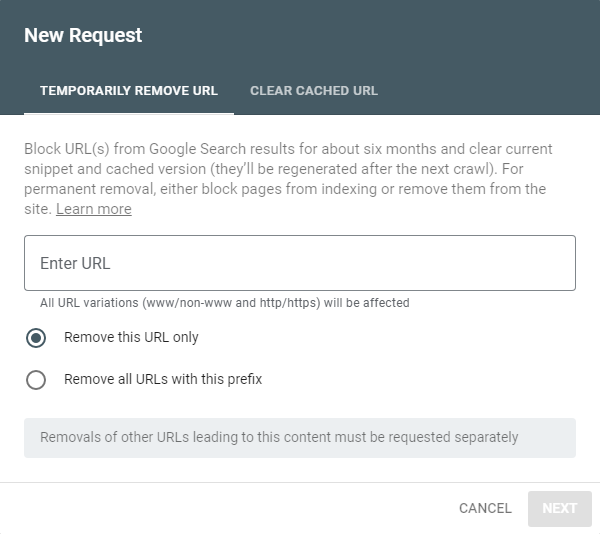

Blocking low-quality pages alone won’t be enough. You need to get rid of unnecessary URLs as well. For temporary removal of URLs, Google Search Console offers the Remove URLs feature.

This feature is very effective if you want to stop showing unnecessary URLs in search engines. The good thing is, the removed URL won’t be lost forever. So, if you have pages on your website that are under construction and you don’t want to show them in search engines, this method can be very effective.

7. Get rid of noindex and canonical tags:

Rogue noindex and canonical tags need to be removed from your website, and we will discuss how to remove both. First, let’s look at removing noindex tags. If you tell Google not to index a specific page of your website, that page won’t be indexed.

You may be wondering, what is the use of noindex tags?

If you want to keep a page of your website private, or if a page is not yet ready to publish, the noindex tag keeps that page out of Google’s index.

In order to remove noindex tags from a particular page, we will demonstrate 2 methods –

- Method 1: Removing meta robots tag

First, you need to look for pages that have noindex meta tags. To do that, you might want to use Ahrefs’ Site Audit. Go to –

Indexability report >> “Noindex page” warnings

Crawl through to see all the affected pages. The pages with noindex tags have the following in their <head> sections –

<meta name="robots" content="noindex">

<meta name="googlebot" content="noindex">

Google won’t index the pages that have the above meta tags unless you remove them. Simply removing these meta tags will permit Google to index the pages.
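If you don’t have access to a site audit tool, a short script can spot these tags in a page’s HTML. The following is a minimal sketch using Python’s built-in HTML parser; `NoindexFinder` and `find_noindex` are illustrative names of our own, and the HTML strings are sample input.

```python
from html.parser import HTMLParser


class NoindexFinder(HTMLParser):
    """Record which robots meta tags carry a noindex directive."""

    def __init__(self):
        super().__init__()
        self.blocked_for = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        name = (attributes.get("name") or "").lower()
        content = (attributes.get("content") or "").lower()
        directives = [part.strip() for part in content.split(",")]
        if name in ("robots", "googlebot") and "noindex" in directives:
            self.blocked_for.append(name)


def find_noindex(html):
    """Return the meta names ('robots', 'googlebot') that say noindex."""
    finder = NoindexFinder()
    finder.feed(html)
    return finder.blocked_for


print(find_noindex('<head><meta name="robots" content="noindex, follow"></head>'))
print(find_noindex('<head><meta name="robots" content="index, follow"></head>'))
```

An empty result means the HTML itself carries no noindex meta tag, although the page can still be blocked through an HTTP header.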

- Method 2: Removing X-robots tag

Just like finding noindex meta tags, you can run a crawl in the Ahrefs’ Site Audit tool. In the Page Explorer, use the “Robots information in HTTP header” filter –

X-robots tags can be the issue that is blocking Google from indexing a particular page on your website. Pages containing such HTTP response headers can be found in the URL inspection tool in Search Console. After entering the URL of a page having an x-robots tag in the Search Console, you will find something like –

“Indexing allowed? No: ‘noindex’ detected in ‘X‑Robots-Tag’ http header”

By using PHP or another server-side scripting language, you can get rid of the x-robots tag header. Google will be able to index that page once you remove this header. Changing the server configuration can also solve this problem sometimes.
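You can also check the response headers yourself. The sketch below is an assumption-laden example: `header_blocks_indexing` is a helper we made up, and it expects the headers as a simple name-to-value mapping rather than fetching them from a live server.

```python
def header_blocks_indexing(headers):
    """Return True if an X-Robots-Tag header carries a noindex directive."""
    for name, value in headers.items():
        if name.lower() != "x-robots-tag":
            continue
        for part in value.split(","):
            # Drop an optional crawler prefix such as "googlebot:"
            directive = part.split(":")[-1].strip().lower()
            # "none" is shorthand for "noindex, nofollow"
            if directive in ("noindex", "none"):
                return True
    return False


# Hypothetical response headers
print(header_blocks_indexing({"X-Robots-Tag": "noindex, nofollow"}))
print(header_blocks_indexing({"Content-Type": "text/html"}))
```

If you fetch a page with `urllib.request.urlopen`, the `headers` attribute of the response also offers `items()`, so it can be passed to this function directly.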

What is a canonical tag and why do you need to remove rogue canonical tags –

Now, we will talk about canonical tags. First, we would like to inform you that – a canonical tag may not always be bad. Google finds the preferred version of a particular page based on such tags. A canonical tag may look something like this –

<link rel="canonical" href="/page.html/">

So what is the problem with having canonical tags? Why do I need to remove them?

You will get your answers to these questions once you realize the difference between a normal canonical tag and a rogue canonical tag. A normal canonical tag is a self-referencing canonical tag. Most pages either have no canonical tag at all or have a self-referencing one. It tells Google that the page itself is the preferred version that needs to be indexed.

The problem comes when a rogue canonical tag appears, because it can point Google to a preferred version of a page that doesn’t even exist. As a result, the original page may never get indexed.
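To make the difference concrete, here is a small Python sketch that reads the canonical tag from a page’s HTML and reports whether it points back to the page itself. `CanonicalFinder` and `canonical_status` are names we invented, and the comparison is a plain string match, which is a simplifying assumption.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class CanonicalFinder(HTMLParser):
    """Remember the href of the first <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.href = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        rel = (attributes.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel and self.href is None:
            self.href = attributes.get("href")


def canonical_status(html, page_url):
    """Describe where the page's canonical tag points."""
    finder = CanonicalFinder()
    finder.feed(html)
    if finder.href is None:
        return "missing"
    target = urljoin(page_url, finder.href)
    if target == page_url:
        return "self-referencing"
    return "points elsewhere: " + target


page = "https://yourdomain.com/page.html"
print(canonical_status('<link rel="canonical" href="/page.html">', page))
print(canonical_status('<link rel="canonical" href="/old-page.html">', page))
```

A “points elsewhere” result is not automatically a problem, but it is worth checking that the target page really exists and is the version you want indexed.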

The Ahrefs’ Site Audit tool can help you to quickly find such types of pages. Just run a crawl and go to the Page Explorer. Use the settings below to find all the rogue canonical tags across the entire site.

After filtering, if you get some results, you should investigate further, as chances are high that these pages contain rogue canonical tags or shouldn’t be in your sitemap in the first place.

Google’s URL inspection tool can also be used to find canonical tags. After inserting the URL of a particular page, if you find a warning saying “Alternate page with canonical tag”, then the page points to another page as its canonical version, and you should check whether that is what you intended.

If the canonical tag is appropriate, you should see something like below in the Coverage.

8. Regularly check crawl errors and fix them

This is an advanced step, and it concerns maintaining indexing frequency. Why do crawl errors occur? Google bots or crawlers may encounter problems while crawling the URLs of your website. You should check for crawl errors frequently, at least once a week.

Google Search Console can help you detect such errors. You need to open the Search Console and then open the crawl stats report by going to –

Settings >> Crawl stats >> Open Report

Crawl errors include two types of errors: server errors and “not found” errors. To do anything meaningful, you need a clear idea of both.

- Server Errors:

A server error means the server failed to handle the crawler’s HTTP request properly. Either the server didn’t get a complete request, or a proper connection with the server couldn’t be established.

- Not found Errors:

If your website contains a page where the server can’t find anything, that page may show the 404 error message. We are all more or less familiar with this problem and this error message indicates that the requested resource can’t be found by the server, as if it never existed.
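If you export crawl results or look at your server logs, the two error types are easy to separate by HTTP status code. The sketch below assumes a made-up list of (path, status code) pairs; `classify_status` is an illustrative helper, not part of any tool mentioned here.

```python
from collections import Counter


def classify_status(status_code):
    """Map an HTTP status code to the crawl error type it represents."""
    if 500 <= status_code <= 599:
        return "server error"
    if status_code == 404:
        return "not found"
    return "other"


# Hypothetical crawl results: (path, HTTP status code)
crawl_log = [("/", 200), ("/old-offer/", 404), ("/checkout/", 503), ("/blog/", 200)]
summary = Counter(classify_status(code) for _, code in crawl_log)
print(summary)
```

Counting the errors by type each week makes it easier to notice when something has started to go wrong.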

The crawl stats report will also show you the crawl frequency, which is worth keeping an eye on constantly. The crawl frequency tells you how often Google crawls your pages. If you find that the frequency is going up, it’s a positive sign. It means Google is visiting your pages more often.

If the frequency is going down, then you need to publish more content to give Google new pages to crawl and index.

Three things need to be checked regularly if you want to keep the indexing flow at a sustainable level. They are – crawl errors, crawl stats, and average response time.

9. Make sure your pages are valuable and blogs are powerful:

According to John Mueller’s tweet –

As the tweet suggests, generating awesome and inspiring content is crucial, and it just might be the most important part of getting your website recognized by your audience. If your content is not truly valuable, technical improvements alone won’t do much good.

Google doesn’t value the pages that don’t meet its requirements. Therefore, they usually don’t get indexed.

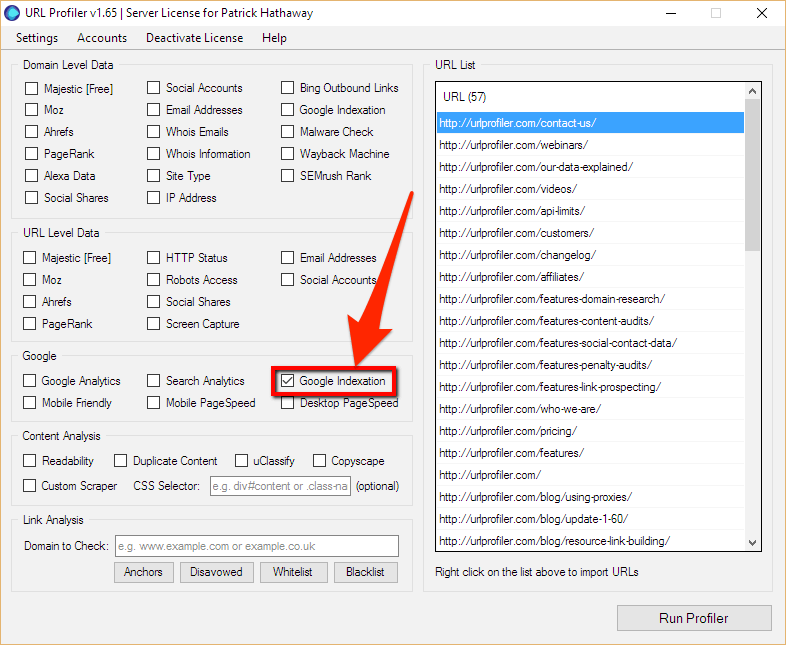

Read your content honestly and ask yourself whether you, as a reader, would find it valuable and usable. If not, then you obviously need to provide higher-quality blogs to your customers. And when we are talking about content, we are particularly addressing the pages of your website. Ahrefs’ Site Audit tool and URL Profiler can help you find low-quality pages.

After you have applied the above settings in the Page Explorer of Ahrefs’ Site Audit tool, you get a list of pages with almost no organic traffic. There is a possibility that some of these pages aren’t indexed yet.

Now, you can use the URL Profiler to check whether Google has indexed the pages you are unsure about. Export the list of URLs that you got from Ahrefs’ Site Audit tool, paste them into the URL Profiler, and check the Google Indexation box.

Image Credit: Ahrefs

Upgrade the overall layout and content of the pages that are still not indexed and submit them again for reindexing.

Don’t forget to change pages that are identical, as Google will hardly index duplicate or near-duplicate pages. In the Site Audit tool, check the Duplicate Content report to find duplicate pages on your website.

Once you have fixed issues with low-quality pages, it’s time to generate some powerful blogs. The more quality content your blog includes, the more there is for crawlers to find. As a result, it won’t take much time to index all the pages of your website.

While publishing blogs, it is important to keep up with current trends. Make sure your blogs are not only filled with content but also informative, containing the most recent data.

You have to show Google that your website shares the most recent and fresh content that relates to the current events or activities. Google loves to ensure the searchers find what they are looking for and find it in the most informative way.

This illustration shows that websites get 434% more indexed pages along with 97% more inbound links when they maintain regular blogging. B2B companies that are engaged with blogging, get 67% more leads. Now, this is productive.

Therefore, no matter what your business is about or what your niche is, you should start blogging. It’s a great way of marketing that is efficient in many ways.

10. Don’t forget to share pages on social media and campaigns:

Social media platforms and paid campaigns can be a really effective medium for getting readers. First, you need to build dedicated pages on each social media platform for your business.

Make sure you obey the rules and regulations while creating pages on different social media. For instance, you can’t create a subreddit on Reddit unless you obtain at least 50 karma.

Sharing pages on Quora will not only help indexation but also drive enormous traffic. We assume you can already feel the importance of social media marketing.

If you do feel the same way, we will share a bonus tip with you. Do you know that sharing content on social media has a significant SEO benefit?

Why? Because it can generate backlinks to your content. Therefore, it will help your site grow its organic traffic.

Other than Reddit, Quora, LinkedIn, and Pinterest, you can also consider Twitter as one of the reliable social media platforms. Many people consider Twitter accounts better verified than Facebook accounts, which is why they trust Twitter more.

For example, you can run a Twitter poll and insert a relevant post link. You will likely get more visitors than you had before. As Twitter is a powerful network, Google regularly crawls this social media platform.

Image Credit: Twitter

As for the importance of sharing posts on Facebook, you probably understand it as well as we do. So, start sharing posts on Facebook as well as on Twitter. Share your website’s content regularly on high-traffic sites.

If you want or have the financial capability, you can also hire a social media marketing agency to boost your posts and increase your site’s traffic.

11. Don’t forget to index your site in other search engines:

Yes, Google may be the king of all search engines. But getting your site indexed in other search engines like Bing, DuckDuckGo, Baidu, Yandex, and Yahoo is not a bad idea.

Although Google might be the indisputable champion with 88.14% of the world’s market share, other search engines hold a significant share as well.

And the status of overall traffic and mobile traffic is worth noticing for these search engines. Perhaps, this chart just might give you an accurate idea –

Nowadays, most website owners or bloggers skip this step. In their opinion, spending time on manually submitting websites to other search engines is not really necessary. They would rather use other strategies for indexing and ranking.

To some extent, they are right, because if your website has already been indexed by Google for several weeks, it’s quite normal that other search engines have already found it. After updating the sitemap for your URLs, you can ask Google to recrawl them by using the URL inspection tool.

There was a time when Google used to permit direct URL submission for indexing, but that time is over now.

That being said, submitting your website’s URL to Bing, Yandex, Yahoo, or other reputed search engines besides Google won’t take much time. You should also know that it doesn’t have any negative effect on your SEO.

If you consider the average conversion rate for all the search engines, you might be encouraged to index your website to other platforms. A conversion rate gives you an idea about the percentage of visitors who end up purchasing something while visiting a website.

Here is a bar chart showing the average conversion rate of the search platforms by advertising channels.

While Google may not say how it uses social media links for search result rankings, Bing openly states that it uses social media links, which it calls “social signals”. Therefore, you can’t ignore them completely.

12. An RSS feed might do the trick sometimes:

If possible, create an RSS feed. Although it’s not strictly necessary, creating one won’t do any harm. As SEO strategies have progressed, site owners have come to prefer having their own email lists. When we say emails, we mean emails from preferred clients.

Not all readers will be your faithful customers. How do you know who the preferred clients are? They are basically your target audience, who come to your site and spend more time on it. An RSS feed can play a vital role in reaching your preferred customers.

“Really Simple Syndication” or “Rich Site Summary” is the full form of RSS. It is an XML-based automated feed, and when you publish a blog on your website, the feed gets updated. While setting up an RSS feed, keep a few things in mind –

- Include valuable infographics or visuals as much as you can so that readers are more attracted to your content and spend more time on it.

- It is better to only include excerpts in your RSS feed. Why? Because many blog posts run longer than 2000 words, and showing all of that text in a feed is not a good practice.

- Decide where you want to put the RSS feed. You can place it in the sidebar, at the end of the posts, or in the footer section. And don’t forget to add other subscription options at suitable places.
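Most blogging platforms generate the feed for you, but it helps to see what an excerpt-only feed looks like. Below is a minimal Python sketch; `build_rss`, the post fields, and the 50-word excerpt length are all assumptions made for this example.

```python
import xml.etree.ElementTree as ET


def build_rss(site_title, site_url, posts, excerpt_words=50):
    """Return an RSS 2.0 feed that carries only a short excerpt of each post."""
    rss = ET.Element("rss", version="2.0")
    channel = ET.SubElement(rss, "channel")
    ET.SubElement(channel, "title").text = site_title
    ET.SubElement(channel, "link").text = site_url
    ET.SubElement(channel, "description").text = "Latest posts from " + site_title
    for post in posts:
        item = ET.SubElement(channel, "item")
        ET.SubElement(item, "title").text = post["title"]
        ET.SubElement(item, "link").text = post["url"]
        words = post["body"].split()
        excerpt = " ".join(words[:excerpt_words])
        if len(words) > excerpt_words:
            excerpt += "..."  # signal that the full post continues on the site
        ET.SubElement(item, "description").text = excerpt
    return ET.tostring(rss, encoding="unicode")


# Hypothetical post on a placeholder domain
posts = [{
    "title": "How indexing works",
    "url": "https://yourdomain.com/how-indexing-works/",
    "body": "Search engines discover pages by crawling links and sitemaps.",
}]
print(build_rss("My Blog", "https://yourdomain.com/", posts, excerpt_words=5))
```

Keeping the excerpt short gives readers a reason to click through to the full post on your site.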

Although most blogging platforms come with built-in RSS feeds, many think that the era of the RSS feed has gone, especially for blog indexing. Well, there is a reason for that. Since the shutdown of Google Reader in 2013, the use of RSS feeds has decreased significantly.

But we still believe it’s an easy way of getting a large number of users in a short amount of time. An RSS feed lets readers subscribe to you without giving you their email addresses. It is an efficient way to get subscriptions, as some people don’t like to give up their email addresses.

Using tools like Feedly or Google’s own RSS management tool Feedburner can help to make your RSS feed more productive. You can also make your own custom RSS feed. Perhaps, this video might help to make a custom RSS feed –

13. Update old articles from time to time:

Once an article is indexed by Google and gets a good position in Google ranking, that article gets crawled frequently. Updating old content is mandatory to maintain a good position in Google ranking.

You may have written a good article about a topic. But as things change over time, the article will gradually lose its ranking if you do not reflect the updates in it. Remember, it doesn’t take long for information to get outdated, especially in the fast-moving marketing world.

Furthermore, Google introduces new rules from time to time. Falling foul of these rules can hurt not only your website but also your entire business. Therefore, while you publish fresh articles that follow those rules, you need to ensure your old content is brought in line with them as well.

Here are some tips that will help you to update your old content in the most effective way –

1. Remove or update outdated information:

First things first, get rid of all the outdated information or update it with new information. Google doesn’t like giving wrong information to users.

Along with updating information, update the terms that are outdated. For instance, if you wrote Google Webmaster Tools in any of your previous articles, replace it with Google Search Console.

2. Detect the posts that need to be updated:

Based on the publish date, make a list of older posts that need updating. We are not suggesting that you change a post completely; we are suggesting that you change the facts, figures, and terms that go out of date over time. If you have a list of older posts, scheduling their updates will become easier.
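If you can export your posts with their publish dates, building this list takes only a few lines of Python. This is a simple sketch: `posts_needing_update`, the one-year threshold, and the sample posts are assumptions for illustration.

```python
from datetime import date


def posts_needing_update(posts, today, max_age_days=365):
    """Return posts older than max_age_days, oldest first."""
    stale = [post for post in posts if (today - post["published"]).days > max_age_days]
    return sorted(stale, key=lambda post: post["published"])


# Hypothetical posts and publish dates
posts = [
    {"title": "Getting started with Search Console", "published": date(2021, 3, 1)},
    {"title": "Sitemap basics", "published": date(2023, 11, 20)},
    {"title": "Using Google Webmaster Tools", "published": date(2019, 6, 15)},
]
for post in posts_needing_update(posts, today=date(2024, 1, 1)):
    print(post["published"], post["title"])
```

Sorting the oldest posts first gives you a natural order for your update schedule.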

3. Maintain a schedule for updating:

Updating old content impacts not only ranking but also site indexing. It is said that updating old content can increase organic traffic by 111%. So, maintain a schedule for updating it. To keep everything flowing, it is better to update a website at least 3 times a week.

4. Provide fresh and recent data in the older posts:

Suppose you wrote a post 2 years ago using data that was accurate at the time. After 2 years, the data may have changed a lot. If you don’t update it, that article will soon lose its position in Google’s rankings.

5. Fix broken links or replace them with new links:

Sometimes, links in previous articles may break, or you may need to add new links to old articles. If a link in an old article is broken, you have to fix it. And if you find a link to a better resource for an old article, you can replace the old one.
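Finding broken links by hand is tedious, so a small script can help. The sketch below uses only Python’s standard library; `fetch_status` and `find_broken_links` are names we made up, and it treats any status of 400 or above (or a failed request) as broken, which is a simplifying assumption.

```python
import urllib.error
import urllib.request


def fetch_status(url, timeout=10):
    """Return the HTTP status code for url, or None if the request fails."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        return error.code  # the server answered, but with an error status
    except OSError:
        return None  # DNS failure, timeout, refused connection, and so on


def find_broken_links(urls, get_status=fetch_status):
    """Return (url, status) pairs for links that look broken."""
    broken = []
    for url in urls:
        status = get_status(url)
        if status is None or status >= 400:
            broken.append((url, status))
    return broken
```

You would call `find_broken_links` with the list of links found in an old article. Some servers reject HEAD requests, so a link flagged here is worth opening in a browser before you replace it.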

6. Include new article links inside old articles:

Insert the links of newly published content inside old articles. While inserting links, make sure the topics of both articles (the old and the new) are relevant. Otherwise, you can add additional sentences that can act as references to insert new content links.

7. Update the methods of solutions:

Once you have created a list of articles that need to be updated, go through them carefully. Check whether the solutions that you provided in the previous articles are still effective today.

If not, update the solutions or methods. Or more importantly, update the viewpoint of your previous articles.

Additional Questions:

1. What is crawling for search engines?

Crawling is the discovery process for search engines: finding new and updated content across all websites. Content doesn’t have to be a bundle of paragraphs. It can be an image, a video, a webpage describing services, an instruction PDF, and more.

Search engines send out their bots, starting from a set of known web pages. The bots then find new URLs on those web pages and follow them. Through this process, the bots or crawlers discover new content, which is then added to a massive database. Google’s indexing system is called Caffeine.

This content is used as a reference for providing relevant results to searchers. Google indexes sites based on what crawling finds.
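To make the idea concrete, here is a toy sketch of link-following discovery in Python. It is a simplification for illustration, not how Googlebot actually works. The WEB dictionary is a made-up miniature website, and crawl is a hypothetical helper that visits pages breadth-first.

```python
from collections import deque

# Hypothetical miniature "web": each page maps to the links found on it.
WEB = {
    "/": ["/blog", "/about"],
    "/blog": ["/blog/post-1", "/blog/post-2", "/"],
    "/about": [],
    "/blog/post-1": ["/blog/post-2"],
    "/blog/post-2": [],
    "/orphan": [],  # no page links here, so the crawler never finds it
}


def crawl(seed_urls, get_links):
    """Visit pages breadth-first from the seeds, following each link once."""
    seen = set()
    queue = deque()
    for url in seed_urls:
        if url not in seen:
            seen.add(url)
            queue.append(url)

    discovered = []
    while queue:
        url = queue.popleft()
        discovered.append(url)
        for link in get_links(url):
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return discovered


if __name__ == "__main__":
    print(crawl(["/"], lambda url: WEB.get(url, [])))
```

Notice that "/orphan" is never discovered because nothing links to it. This is one reason internal links and sitemaps matter for getting pages found.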

2. What is an index for a search engine?

To put it simply, an index is a massive database in which a search engine stores data on billions of pages. When Google indexes a page, it means the page has already been crawled by the spiders or Google bots. At the same time, the URL of that page is included in the database.

Google’s index stores all the information from the URLs that have been crawled. Google uses its own algorithm to sort through this huge database and present pages to users when they perform a search.

Google bots or spiders crawl new pages regularly and update information in the database. So, this index is constantly growing. A report says,

“Google indexing has made up over 130 trillion individual pages”
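For a feel of what an index does, here is a tiny sketch of an inverted index in Python: a mapping from each word to the pages that contain it. This is a classroom-style simplification under our own assumptions, and Google's real index is far more sophisticated. The names build_index and search are hypothetical.

```python
import re
from collections import defaultdict


def tokenize(text):
    """Split text into lowercase words and numbers."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(pages):
    """Map every word to the set of URLs whose text contains it."""
    index = defaultdict(set)
    for url, text in pages.items():
        for word in tokenize(text):
            index[word].add(url)
    return index


def search(index, query):
    """Return the URLs that contain every word of the query, sorted."""
    words = tokenize(query)
    if not words:
        return []
    matches = set.intersection(*(index.get(word, set()) for word in words))
    return sorted(matches)


if __name__ == "__main__":
    # Hypothetical crawled pages: URL -> page text.
    pages = {
        "/a": "How to index a site",
        "/b": "Index your blog quickly",
        "/c": "About us",
    }
    index = build_index(pages)
    print(search(index, "index blog"))
```

Because the lookup goes from word to pages, a search does not need to reread every page. A page that was never crawled is simply absent from the mapping, so it cannot appear in results.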

3. How to figure out if your site is indexed or not?

If it’s been a week since your website was launched, chances are it is already indexed. To check, here is the easiest method, which needs no tools.

Type (site:yourdomain.com) in Google. You will see a list of results showing the pages that are from your website. For example, we typed (site:themeim.com) in Google.

If the results list pages from your site, then congratulations! Your website is already indexed. If no pages from your site appear, then you need to look for some suggestions, as your website hasn’t been indexed by Google yet.
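If you check several domains often, you can generate the search link instead of typing it. The short Python sketch below only builds the URL for you to open in a browser; the function name site_query_url is our own.

```python
from urllib.parse import urlencode


def site_query_url(domain):
    """Build a Google search URL for the query site:<domain>."""
    return "https://www.google.com/search?" + urlencode({"q": f"site:{domain}"})


if __name__ == "__main__":
    print(site_query_url("themeim.com"))
    # prints https://www.google.com/search?q=site%3Athemeim.com
```

The colon is percent-encoded as %3A, which Google reads the same way as a typed site: query.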

4. Are there any benefits of getting a quick index from Google?

Of course, there are benefits. To put it simply, the first step of getting a suitable rank in Google is to index your site as quickly as possible.

Because of the constant crawling of Google bots, you can expect to get indexed by Google one day or another. If that’s the case, then why do you need to put so much effort just to help the crawlers to index your site? Won’t they find it anyway?

As we have already explained, quick site indexation is the first step toward a better ranking. The equation looks like this: Quick Website Indexation = Better Rank in Google

Organic search is worth the effort: it is commonly reported that about 51% of website traffic comes from organic search. However, achieving a higher rank doesn’t happen overnight. In fact, older pages often hold the top positions.

But that doesn’t mean that new pages can’t achieve better ranks. Well-organized and informative pages take less time to get indexed and get better rankings.

As a result, the sooner Google indexes your pages, the sooner they will start competing for ranking.

Therefore, the equation goes like this –

Indexing ≠ Ranking

Ranking = SEO-Friendly Content

Final Words:

At the end of this article, we highly recommend that you follow these 13 steps to get Google to index your website. We can guarantee that by following the above steps, all the pages on your website will be indexed if they are not already.

All it takes is a little effort for indexation and for ranking. If you make sure you are doing everything you can on your end, there is nothing to stop you from getting indexed and ranked. The common obstacles to indexing are hidden technical issues and low-quality content. If you encounter such issues, you need to improve the content and consult experts to fix the hidden technical issues.